Installment One - The Invitation

THE THINKING NETWORK

A documented journey in building autonomous intelligence from the ground up, written for the THT community, who asked to understand AI from the inside out. No computer science degree required. Just curiosity and a willingness to travel.

Some of you have been asking about AI.

Not the surface-level questions. Not which prompt to use or what job might disappear next. Deeper than that.

How does it actually work? What is it really doing underneath? Is there a way to understand it that doesn't require taking someone else's word for it?

Those questions deserve a real answer. Not a surface one.

I asked if you wanted me to explore them publicly. You said yes. So here we are.

I should tell you something before we begin. I live in the technical weeds. Configuration files. Protocol specifications. Network diagrams that mean nothing to most people outside this field. This series requires a different language. One that doesn't sacrifice accuracy for accessibility but doesn't hide behind complexity either. Finding that language is part of my growth here.

The Wood and the Bowl

I want to start somewhere most people don't.

Not with a language model. Not with a chatbot. Not with the polished, finished surface where most AI conversations begin and end.

I want to start with a network.

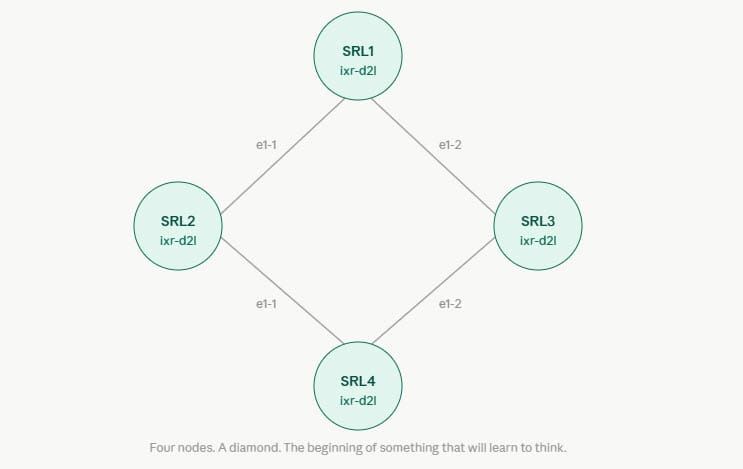

Specifically, a network I am building in my personal lab. Four nodes. A diamond topology. Open-source tooling running on repurposed hardware. A system learning, slowly and honestly, through regular failure, to sense its own environment, build a model of what normal looks like, and act on its own when reality departs from that model.

That is artificial intelligence. Not the surface. The wiring.
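For readers who want to see that loop of sensing, modeling normal, and reacting in concrete form, here is a minimal sketch in Python. It is not the lab's tooling. It assumes a single numeric metric (for example, latency in milliseconds between two nodes), uses a running mean and standard deviation as a simple stand-in for "a model of what normal looks like," and flags any reading more than three standard deviations from that mean. The class name `Baseline`, its methods, the ten-reading warm-up, and the threshold of 3 are all hypothetical choices made for illustration.

```python
import math


class Baseline:
    """Hypothetical model of 'normal' for one metric (e.g. latency in ms).

    Tracks a running mean and variance with Welford's online algorithm,
    so past readings never need to be stored.
    """

    def __init__(self, threshold: float = 3.0, warmup: int = 10):
        self.threshold = threshold  # std devs from the mean that count as "departed from normal"
        self.warmup = warmup        # readings to learn from before judging anything
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0              # running sum of squared deviations from the mean

    def stddev(self) -> float:
        if self.count < 2:
            return 0.0
        return math.sqrt(self._m2 / (self.count - 1))

    def is_anomalous(self, value: float) -> bool:
        """Compare a reading against the current model of normal."""
        if self.count < self.warmup:
            return False
        sd = self.stddev()
        if sd == 0.0:
            return value != self.mean
        return abs(value - self.mean) / sd > self.threshold

    def update(self, value: float) -> None:
        """Fold a reading into the model (Welford's update step)."""
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (value - self.mean)

    def observe(self, value: float) -> bool:
        """Sense, compare, then learn. Returns True if the reading looks abnormal."""
        anomalous = self.is_anomalous(value)
        if not anomalous:
            # Only normal readings update the baseline, so a single spike
            # does not immediately redefine what "normal" means.
            self.update(value)
        return anomalous


# Usage with made-up latency readings (ms); one obvious spike
baseline = Baseline()
readings = [10.2, 9.8, 10.1, 10.0, 9.9, 10.3, 10.0, 9.7, 10.1, 10.2, 48.0, 10.0]
flagged = []
for r in readings:
    if baseline.observe(r):
        flagged.append(r)
print(flagged)  # prints [48.0]
```

The design choice worth noticing is in `observe`: abnormal readings are kept out of the baseline, so one spike does not quietly become the new normal. A real system would also need a way to accept genuine, lasting change, which this sketch deliberately leaves out.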

Starting here instead of with ChatGPT is a deliberate choice. ChatGPT is the finished bowl on the display stand. Beautiful. Functional. Immediately useful.

But if you only ever interact with finished bowls, you never understand wood. You never understand the tool. You never understand what the maker was actually doing, or why certain decisions get made at certain moments.

The lab is the wood. The wiring is visible here. The decisions are traceable.

When something fails, and things fail regularly, the failure is honest and informative rather than hidden behind a polished interface.

That kind of understanding transfers. Once you see how a network learns to think for itself, you see the same architecture everywhere. In language models. In recommendation systems. In the decisions a complex organization makes under pressure.

The material is different. The problem is the same.

Solving the Same Problem Twice

I have been training in martial arts since I was six years old. I am going on fifty-two. I have been working with networks for thirty-two years.

At some point during the design phase of this project, I realized I had been solving the same problem twice.

The problem is this: How does any system learn to think for itself?

Not execute instructions. Not follow preset rules. Actually orient to its environment, recognize patterns it has never explicitly been shown, and act with something that resembles judgment rather than reflex.

In martial arts training, that problem takes decades.

You begin by reading straight lines. The straight lead in Jeet Kune Do: direct, economical, the shortest path between two points. Occam's razor in motion. It works until the attacks stop coming in straight lines. Until two things happen simultaneously. Until the context shifts faster than your training accounted for.

The Orient phase of the OODA loop (Observe, Orient, Decide, Act) is where accumulated experience lives. It is where pattern recognition happens below conscious thought. What takes years to understand is that Orient is everything. The rest follows from how well you see.

Networks have the same problem.

Reading Curves

A network can be taught to read straight lines. Given this input, predict this output. Useful for certain things. Honest about its limitations, if you know where to look.

But real networks don't fail in straight lines. They fail the way pressure comes: curved, compounding, shaped by variables that weren't in the original model.

This series is about teaching a network to read curves.
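To make "straight lines" versus "curves" a little more concrete, here is a small, self-contained Python sketch using synthetic numbers, not lab data. It assumes a hypothetical link whose latency follows latency = 1 / (1 - load), the shape of the textbook single-server queueing delay curve: nearly flat at light load, then climbing steeply as the link nears capacity. It fits a straight line to that curve with ordinary least squares and prints how far off the line is at each load level. The function name `fit_line` and the data are assumptions made for illustration.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical link load, as a fraction of capacity: 0.0, 0.1, ..., 0.9
loads = [i / 10 for i in range(10)]

# Synthetic latency in ms with a curved shape: 1 / (1 - load)
latencies = [1 / (1 - load) for load in loads]

slope, intercept = fit_line(loads, latencies)

# Error = actual - predicted; positive means the straight line guessed too low
errors = [lat - (slope * load + intercept) for load, lat in zip(loads, latencies)]

for load, err in zip(loads, errors):
    print(f"load {load:.1f}: straight-line error {err:+.2f} ms")
```

The line is wrong in a patterned way, not a random one: too low at both ends, too high in the middle, and worst at the heaviest load, where the curve turns upward most sharply. With these particular numbers it even predicts a slightly negative latency at zero load.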

I don't know exactly where the journey ends. That's the nature of research that is actually research rather than a demonstration. What I know is the direction. And I know that the questions this lab is asking are the same questions worth asking about any system that needs to think for itself.

Come along. This is going to take a while. It won't always go as planned. That's exactly how it should be.

Next installment: Before the First Command. What it actually takes to begin, and why the first obstacle was a thumb drive.